Why Single-Model AI Fails at E-Commerce — And What We Built Instead

Why Single-Model AI Fails at E-Commerce — And What We Built Instead

Keywords: multi agent ai ecommerce, composable agent patterns, multi-agent system design, ecommerce ai analytics

Introduction

Most "AI-powered" e-commerce tools are a single prompt wrapped in a nice UI. They look at one thing at a time — your pricing, your email open rates, your catalogue gaps — and produce a summary. They never connect the dots between domains.

That's not analysis. That's reporting with extra steps.

We built Signal — a multi-agent system where five specialist agents each analyse a different part of an e-commerce business, then a synthesis agent connects insights across all of them. The result is cross-domain diagnosis that no single model can produce, because the most valuable insights live in the space between data sources.

This article explains why single-model AI fails at e-commerce analytics, how composable agent architecture solves the problem, and the design principles from Anthropic's agent research that shaped our approach.

The Single-Model Problem

A single AI model looking at your store data can do one thing well at a time. Give it your order data and it'll tell you revenue is down. Give it your product catalogue and it'll flag dead inventory. Give it your email metrics and it'll note declining open rates.

But it won't tell you that revenue is down because the dead inventory SKUs were the ones in your email campaigns, and open rates dropped because you're promoting products nobody wants anymore. That chain of reasoning requires holding multiple data domains in context simultaneously and looking for cross-domain patterns.

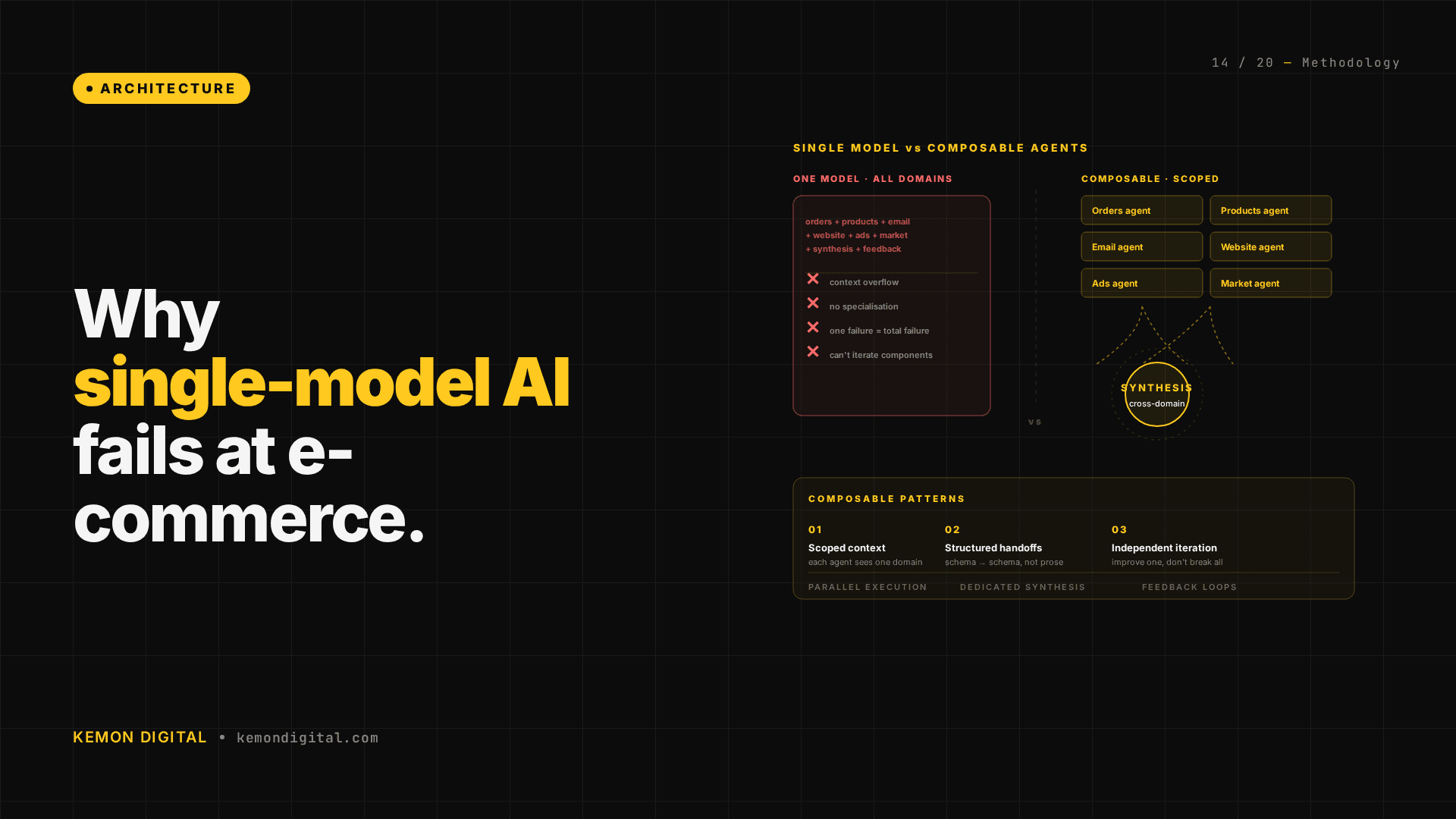

Single-model approaches fail here in three ways:

Context window saturation. E-commerce operations generate enormous volumes of data. Tens of thousands of SKUs, hundreds of campaigns, six-figure email lists. Loading all of that into a single context window either exceeds the limit or degrades reasoning quality as the model tries to attend to everything at once.

Domain expertise dilution. A prompt that says "analyse my orders, products, email, website, and market data" produces surface-level observations across all five domains instead of deep analysis in any one. The model spreads its reasoning thin.

No cross-referencing mechanism. Even if you run five separate analyses sequentially, there's no structured way to combine findings. The insight that connects an order pattern to a pricing change to a competitor move requires a dedicated synthesis step that single-model workflows don't have.

The Architecture: Five Specialists, One Synthesiser

Signal runs five channel agents, each scoped to a single data domain:

Orders agent — revenue patterns, customer segmentation, discount behaviour, return rates, cohort analysis. Reads order export data and produces a structured findings document.

Products agent — catalogue health, pricing architecture, dead inventory detection, margin analysis, category performance. Reads product and inventory data.

Email agent — campaign ROI, automation gaps, list decay, segment performance, deliverability signals. Reads email platform exports.

Website agent — traffic patterns, conversion funnel analysis, UX friction points, content gaps, channel attribution. Reads analytics exports.

Market agent — competitor pricing, market positioning, geographic opportunity, promotional cadence, share-of-search. Reads scraped competitor data and market intelligence.

Each agent runs its own analytical framework — a structured set of questions, evaluation criteria, and output formats designed for its specific domain. The frameworks are refined iteratively; they're not generic prompts.

Then the synthesis agent reads all five outputs and cross-references them. It looks for patterns that only emerge when two or more domains are viewed together.

Where the Insights Actually Live

The most valuable findings are never in a single domain. They're in the intersections.

Orders × Products: The orders agent flags a spike in returns for a specific category. The products agent confirms those SKUs had a recent price increase. The return spike isn't a quality issue — it's a pricing issue. No single-domain analysis catches that.

Email × Website: The email agent reports declining click-through rates on a campaign series. The website agent shows the landing pages those emails link to have a high bounce rate. The email isn't the problem — the destination is. Fix the page, not the subject line.

Market × Products × Orders: The market agent detects a competitor undercutting you by 15% on a high-volume category. The products agent shows your margin on those SKUs is already thin. The orders agent confirms demand is declining. The diagnosis: this is a category to exit or restructure, not defend with more ad spend.

These cross-domain chains are where the ROI of multi-agent architecture justifies itself. A dashboard can show you that returns are up. It takes a multi-agent system to tell you why and what to do about it.

Design Principles: Why Composable Beats Monolithic

Three principles from Anthropic's agent design research shaped this architecture:

1. Start simple, add complexity only when needed. The first version of Signal was three agents, not five. We added the market and email agents only when the synthesis layer consistently needed data those domains provided. Every agent must justify its existence by producing insights the synthesis layer couldn't generate without it.

2. Specialist agents with clear boundaries. Each agent has exactly one job and one data source. The orders agent never looks at product data. The products agent never reads email metrics. This prevents scope creep and makes each agent's output predictable and testable.

3. Orchestrate, don't centralise. The synthesis agent doesn't re-analyse raw data. It reads structured outputs from the five specialists and looks for cross-domain patterns. This keeps the synthesis step fast and focused on what it does uniquely well: connecting dots between domains.

The alternative — one large agent that ingests everything — sounds simpler but fails in practice. It produces shallow analysis because it can't go deep in any one domain while holding all the others in context.

The Feedback Loop

Signal isn't static. After each analysis cycle, findings are reviewed by the operator. Feedback is captured: which insights were actionable, which were noise, which missed something important.

That feedback is processed by a dedicated agent and fed back into the analytical frameworks. Over a dozen iterations in three months, the frameworks have gotten significantly sharper — fewer false positives, more actionable findings, better cross-domain connections.

This is what Anthropic's research calls the evaluator-optimizer pattern. The system evaluates its own output quality through human feedback, then optimises its instruction set for the next cycle. It's not just running analysis — it's learning what good analysis looks like for this specific business.

What This Means for Your Business

If you're evaluating AI analytics tools for e-commerce, the architecture question matters more than the model question. A well-designed multi-agent system on a mid-tier model will outperform a single-prompt approach on the most powerful model available — because the bottleneck is never reasoning quality. It's cross-domain coverage.

Questions worth asking any AI analytics vendor: How many data sources does it analyse simultaneously? Does it cross-reference between domains? Can it explain why something is happening, not just that it happened? Does it learn from feedback?

If the answer to any of those is no, you have a reporting tool, not an analytics system.

FAQ

Q: How many agents do I need?

A: Start with the minimum that covers your critical data domains. For most e-commerce operations, orders and products are non-negotiable. Email, website, and market intelligence are high-value additions. The right number is the fewest agents that give the synthesis layer enough to work with.

Q: Can't I just run five separate ChatGPT conversations?

A: You can run the individual analyses that way. What you can't do is the synthesis step — the structured cross-referencing that connects findings across domains. That requires a dedicated agent reading all five outputs in a single context, with an analytical framework designed for cross-domain pattern detection.

Q: How long does a full analysis cycle take?

A: Under three hours for the full five-agent cycle including synthesis. Most of that time is the agents reading data. The reasoning and cross-referencing is fast.

Q: Does this work for small stores?

A: The architecture scales down. A store with a few hundred SKUs and a handful of campaigns still benefits from cross-domain analysis — the intersections are where the insights live regardless of scale. The implementation is simpler because data volumes are smaller.

Q: What if my data is messy?

A: That's what the data standardisation agent handles. Raw exports from different platforms arrive in different formats, schemas, and timestamp conventions. The standardisation step normalises everything before the channel agents see it. Messy input is expected, not a blocker.