Production AI vs Demo AI: Why the First Draft Is Always Terrible

Production AI vs Demo AI: Why the First Draft Is Always Terrible

Keywords: production ai vs demo ai, ai content generation at scale, prompt engineering ecommerce, guardrails ai production

Introduction

We generated product descriptions for a luxury fashion catalogue. Tens of thousands of SKUs. Multiple languages. Brand-appropriate tone for names like Balenciaga, Marni, and Alexander McQueen.

A human copywriting team would take months. Our system did it in days.

But the first draft was terrible. Not factually wrong. Not off-brand exactly. Just flat. It read like an AI wrote it — because it did. The difference between that first draft and what actually shipped was roughly 30 iterations on the prompt architecture, an extensive QA rule set baked into the system, and a human editor who understands luxury fashion doing a final pass.

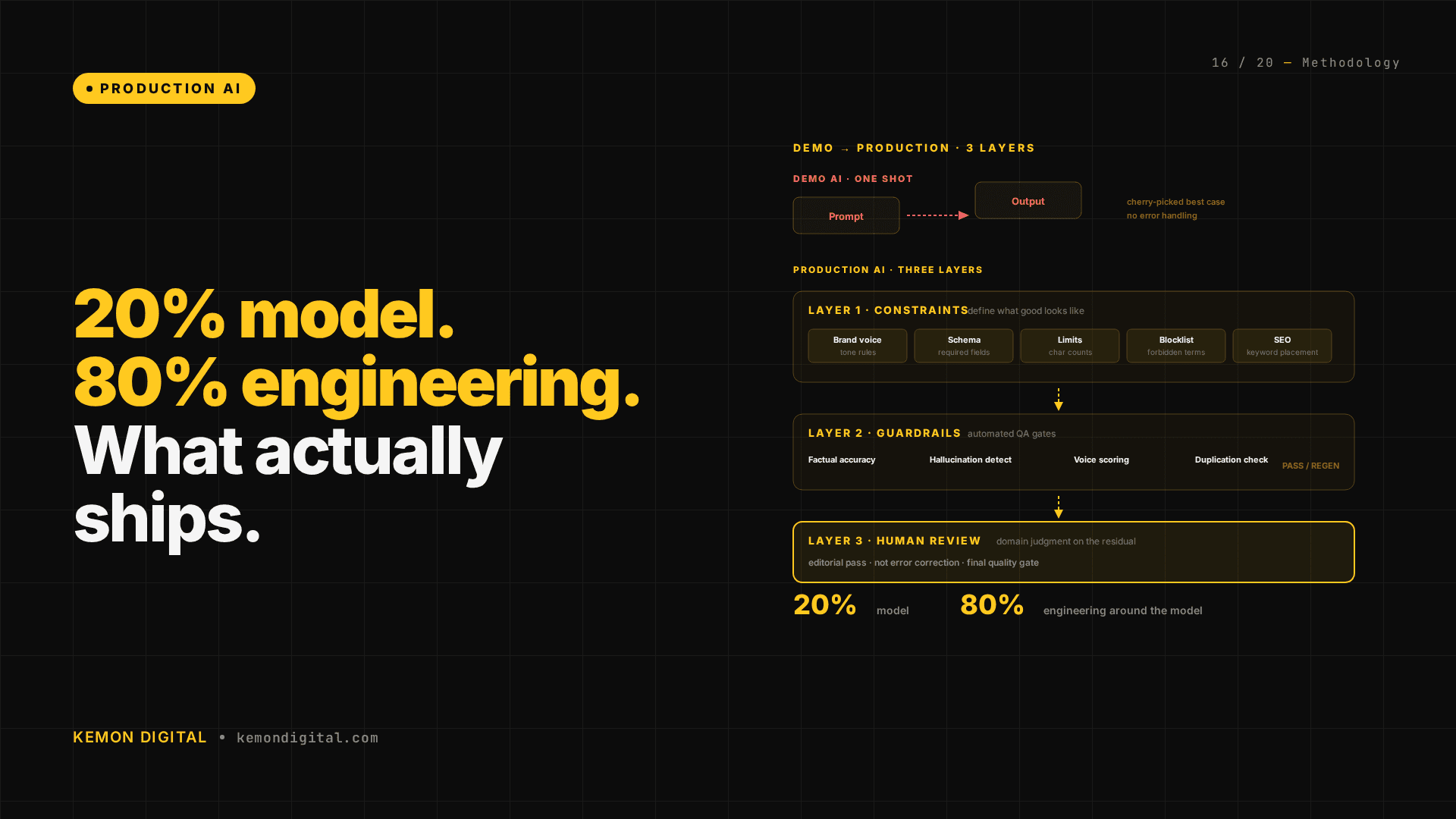

Every AI product that ships is 20% model and 80% engineering around the model. This article explains what that 80% actually looks like.

The Demo Trap

AI demos are impressive. Type a prompt, get a product description, show the client. It takes thirty seconds and looks like magic.

The trap is that demos optimise for the best single output. Production optimises for the worst output across thousands of runs. Those are fundamentally different engineering problems.

A demo can cherry-pick. A production system has to ship every output. The description that's 95% good but casually invents a fabric the garment isn't made of — that's a customer return waiting to happen. The one that sounds generically premium but doesn't match the specific brand's voice — that's a brand relationship issue.

At scale, the question is never "can AI do this?" It's "can AI do this reliably, thousands of times, without producing outputs that damage trust?"

What the 80% Looks Like

The 80% breaks down into three layers: constraints, guardrails, and human review.

Layer 1: Constraints

We don't ask the AI to "write a good product description." We give it a schema:

- Brand voice rules — each luxury brand has documented voice characteristics. Balenciaga is different from Marni is different from Alexander McQueen. The prompt includes brand-specific tone instructions.

- Character limits — different platforms need different lengths. Shopify descriptions, social media snippets, and catalogue entries each have their own constraints.

- Required attributes — every description must include fabric composition, care instructions, sizing context, and key design features. If the source data includes these, they must appear. If it doesn't, the system flags the gap rather than hallucinating.

- Forbidden phrases — a blocklist of language that doesn't belong in luxury fashion. Generic filler. Hyperbolic claims. Anything that sounds like fast fashion marketing.

- SEO requirements — primary keyword in the first sentence, secondary keywords distributed naturally, meta description within character limits.

The more specific the constraints, the better the output. This is counter-intuitive — it feels like you're limiting the AI. You're actually focusing it. A model with creative freedom produces average output. A model with tight constraints produces consistent output.

Layer 2: Guardrails

Guardrails are the automated QA checks that run on every generated output before it reaches human review:

- Factual accuracy check — does the description match the source product data? If the garment is 100% wool, the description can't mention cotton. This is a programmatic cross-reference, not an AI judgment call.

- Brand voice scoring — a separate evaluation pass that scores the output against the brand voice rubric. Outputs below threshold get regenerated with additional guidance.

- Hallucination detection — does the description mention features, materials, or design elements not present in the source data? If so, flag and regenerate.

- Length and format validation — mechanical checks on character counts, required sections, and formatting standards.

- Duplication detection — across thousands of descriptions, the AI tends to reuse phrases. A similarity check catches outputs that are too close to others in the batch.

Each guardrail is a gate. An output must pass all gates to proceed to human review. The gates are cheap — most are programmatic string matching and comparison. They catch the easy failures before a human ever sees them.

Layer 3: Human Review

The final layer is a human editor who understands the domain. In this case, someone who knows luxury fashion — what sounds right for a Marni cardigan versus a McQueen blazer.

Human review catches what guardrails can't: tone that's technically correct but feels wrong. Descriptions that are accurate but uninspiring. Subtle mismatches between the product's visual identity and the written description.

The key is that human review is the final pass, not the first. By the time a human sees the output, constraints have shaped it and guardrails have filtered the obvious failures. The human's job is editorial judgment, not error correction.

The Iteration Cycle

Thirty iterations sounds like a lot. Here's what each round typically addresses:

Iterations 1-5: Basic output quality. The model generates descriptions that are correct but generic. Voice is flat. Adjustments: tighter brand voice rules, more specific examples in the prompt.

Iterations 6-15: Edge case handling. The model struggles with unusual product types (accessories, fragrance, homeware vs. clothing). Adjustments: category-specific prompt branches, additional constraints per product type.

Iterations 16-25: Consistency at scale. Individual outputs are good, but across thousands, patterns emerge — repetitive phrases, inconsistent capitalisation of brand terms, varying approaches to sizing information. Adjustments: duplication detection, format standardisation, terminology lists.

Iterations 26-30: Production polish. The gap between "AI-generated" and "editorially published" closes. Adjustments are subtle: sentence rhythm, paragraph flow, the balance between information density and readability.

Each iteration requires generating a batch, reviewing it, identifying the pattern that needs fixing, updating the constraints or guardrails, and running the batch again. It's engineering work, not prompt tweaking.

The Principle: Constraints Beat Creativity

Anthropic's prompt engineering guidance covers this principle directly. Structured output with constraints beats creative freedom. The more you define what the output must be, the better it gets.

This applies beyond product descriptions:

- Analysis reports — define the structure (executive summary, findings by domain, prioritised actions), the tone (direct, no hedging), and the format (short paragraphs, concrete before abstract). The output is dramatically better than "analyse this data and write a report."

- Email campaigns — define the hook structure, CTA placement, character limits, and forbidden phrases. The AI produces emails that need light editing, not rewrites.

- Ad copy — define the headline format, value proposition hierarchy, and platform-specific constraints (character limits, emoji policy, CTA options). The output is production-ready in fewer iterations.

The universal pattern: every time you're tempted to give the AI more freedom, give it more structure instead.

Why This Matters for Buyers of AI Services

If an agency or vendor shows you an AI demo and the output looks great, ask one question: "Show me the output at position five thousand."

The demo shows the best case. Production is about the average case — and more importantly, the worst case. A system that produces brilliant output 95% of the time and hallucinated garbage 5% of the time is not a 95% solution. It's a system that damages trust one in twenty times.

The quality of an AI production system is measured by:

- Floor, not ceiling. What's the worst output it produces, not the best?

- Guardrails, not prompts. What automated checks prevent bad output from shipping?

- Human integration, not human replacement. Where in the pipeline does a human add judgment?

- Iteration count, not generation speed. How many rounds of refinement went into the prompt architecture?

FAQ

Q: Can't I just write a really good prompt and skip the iteration?

A: For a single output, yes. For thousands of outputs across diverse product types, no. The edge cases that a single prompt misses accumulate at scale. The iteration cycle is how you discover and address those edge cases systematically.

Q: How much does the human review layer cost?

A: It depends on volume and domain complexity. For luxury fashion, a skilled editor reviews roughly fifty to a hundred descriptions per hour at the final-pass stage (after constraints and guardrails have done their work). Without those layers, the same editor would spend significantly more time on error correction instead of editorial judgment.

Q: Does this approach work for non-content AI applications?

A: The principle is universal. Any AI system operating at scale benefits from the three-layer approach: constraints (define what good looks like), guardrails (automated QA), and human review (domain judgment on the residual). This applies to data analysis, code generation, customer service responses — anywhere AI output affects trust.

Q: What model do you use?

A: Claude. But the model choice matters less than the engineering around it. The constraints, guardrails, and iteration cycle we've described would improve output quality on any model. The 80% that matters is not which model you pick — it's the production engineering that surrounds it.

Q: How do you handle multiple languages?

A: Each language gets its own constraint set — not just translation but localisation of tone, idiom, and cultural context. The guardrails (factual accuracy, length validation) work across languages. Brand voice scoring requires language-specific rubrics. Human review requires native or near-native speakers for each target language.